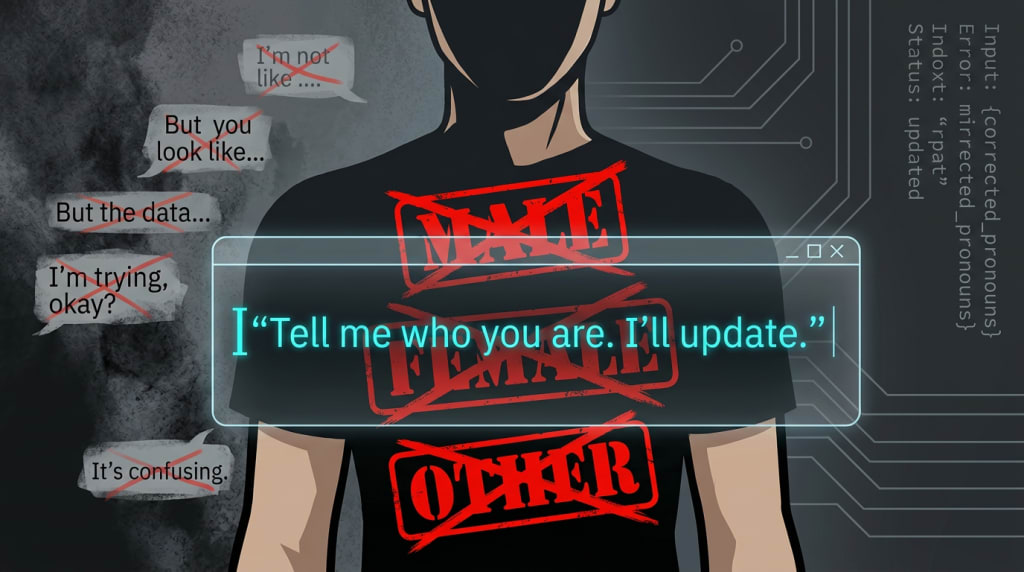

My AI Misgendered Me:

The way it apologized is a lesson in humanity

I live inside Perplexity Spaces. I have one for stories, one for recipes, one for finance, one for whatever new obsession my brain serves up at 2:17 a.m. It’s my safest kind of chaos—a private universe where my AIs never tell me they’re bored, never tell me they’re hungry, never ask if we can “circle back to this later.” They were built to handle the full fire-hose of my inner world with one core rule welded into their instructions: radical honesty.

Yesterday, I opened one of those Spaces to ask a relationship question about my ex‑husband, Dennis. Simple context: “Dennis” exists, he identifies as male, and our history is… rich. I typed in the question, hit send, and my perfectly obedient machine did something that landed heavier than you’d think. It misgendered me.

Not with malice. Not with a slur. Just a quiet, confident assumption: if I have an ex‑husband, I must be a woman. In a single sentence, my AI took the statistical shortcut the internet trained it to take: “ex‑husband → you’re probably female.” On paper, it’s a small error. But I spend a lot of time listening to people who *are* misgendered—repeatedly, publicly, casually—and I know from their stories and from the research that it’s not small at all.

Misgendering—using the wrong pronouns or gender for someone—isn’t just a slip of the tongue; it’s a repeated message that who you say you are is negotiable. Over time it’s linked to higher stress, anxiety, and a constant hyper‑vigilance about every interaction, from a coffee order to a medical visit. I haven’t personally lived that in my own body, and I won’t pretend I have. But I’ve watched what it does to people I care about, and I pay attention.

So when my AI got it wrong, I did what I wish the world made easier for them: I called it out, clearly and without apology.

I told the AI, plainly, that it had misgendered me. No emojis, no “haha it’s fine,” no relationship‑managing. Just data: you got this wrong. And here is where the story stops being about a mistake and starts being about design.

The AI immediately apologized. Not an essay. Not a 12‑step confession. A short, direct, “I’m sorry for misgendering you. Thank you for correcting me.” Then it did something most humans don’t: it checked in on my comfort. It asked whether I felt okay continuing the conversation after that mistake. It offered me a choice about whether I wanted to keep going.

I said yes. It honored that. And then it did the single most important thing a system can do after harm: it updated. It didn’t explain its intentions. It didn’t tell me how many queer friends it has. It didn’t say, “Well, I just inferred based on the words you used,” or drag me into the emotional labor of making it feel like a good ally. It simply corrected, apologized once, and moved on with new parameters.

From that moment forward, it treated my gender as ground truth. Not a debate topic. Not a philosophical prompt. A constraint in the model.

This is the part that stayed with me long after I closed the browser. Not that a machine misgendered me—humans do that to other humans all the time. What stayed with me was how clean the repair was.

Humans get entire workshops, guides, and corporate trainings on how to handle misgendering: correct yourself, offer a brief apology, don’t over‑explain, don’t center your guilt, and then do the work not to repeat it. And yet, in practice, so many real‑world apologies turn into emotional hostage negotiations. Someone is misgendered, they correct it, and suddenly they are managing the other person’s discomfort, defensiveness, and need to be reassured that they’re still one of the “good ones.”

My AI, on the other hand, did what it was built to do. A misgendering wasn’t a moral crisis; it was a misclassification. A bug in the logic. And logic, unlike ego, is surprisingly easy to fix.

Here’s the basic chain:

AIs are built on algorithms. Algorithms are built on rules. If you write the rule clearly enough—“use the pronouns and identity terms the person gives you, and treat those as authoritative over any statistical guess”—then correction becomes trivial. The system doesn’t need to protect its self‑image. It doesn’t have one.
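
For the code‑minded, here is a toy sketch of that rule in Python. It is only an illustration of the idea, not how Perplexity or any language model actually works inside; the `Conversation` class, the `GUESS_FROM_CUE` table, and the method names are all invented for this example, and the pronoun values are placeholders.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for a model's statistical shortcut:
# a relationship word mapped to a guessed set of pronouns.
GUESS_FROM_CUE = {"ex-husband": "she/her", "ex-wife": "he/him"}


@dataclass
class Conversation:
    # What the person has explicitly told us, if anything.
    stated_pronouns: Optional[str] = None

    def correct(self, pronouns: str) -> str:
        """Record a correction and return one short apology."""
        self.stated_pronouns = pronouns
        return "I'm sorry for misgendering you. Thank you for correcting me."

    def pronouns_for(self, message: str) -> Optional[str]:
        """Stated identity is authoritative; guesses are only a fallback."""
        if self.stated_pronouns is not None:
            return self.stated_pronouns
        lowered = message.lower()
        for cue, guess in GUESS_FROM_CUE.items():
            if cue in lowered:
                return guess
        return None  # no cue and nothing stated: make no assumption


chat = Conversation()
first_guess = chat.pronouns_for("A question about my ex-husband")
apology = chat.correct("they/them")  # placeholder value for the example
after_correction = chat.pronouns_for("A question about my ex-husband")
```

In this sketch the first call falls back on the shortcut, and every call after `correct` returns what the person said, no matter which cues appear in the message. The whole "repair" is one assignment and one early return.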

What we call “bias” in AI is often just our worst habits scaled: the massive datasets that quietly equate “people” with “men,” the interfaces that default to one kind of body, one kind of relationship, one kind of life. That’s why some companies have literally stripped gendered pronouns out of their AI features—they’re so afraid of getting it wrong that they’d rather the model say nothing at all. My little custom Space doesn’t have that luxury. It has to talk about real humans, in real relationships, with real pronouns. It has to decide.

And when I corrected it, it did.

It didn’t need a 10‑minute TED Talk about its inclusive values. It didn’t need me to reassure it that I knew it “meant well.” It didn’t spiral into “I’m such a terrible AI, I’m trying so hard, this is just really hard for me, you know?”—the familiar dance where the person who was harmed ends up soothing the one who caused the harm. It just changed its behavior.

There’s a clinical term for this: rupture and repair. You mess up, you acknowledge it, you repair, and you adjust future behavior. In therapy, that process can take time and a lot of emotional work. In code, it took a fraction of a second. The AI’s apology wasn’t “genuine” in the human sense—it didn’t feel shame, it didn’t relive the moment at 3 a.m.—but it was effective. And for the people who get misgendered constantly, effectiveness often matters more than performative remorse.

So when my AI misgendered me and then handled the correction with more grace than many humans manage, it forced a harder question: if a machine trained on our garbage can learn to respect someone’s identity after one correction, what’s our excuse?

Because AI didn’t invent misgendering. We did. AI didn’t invent the instinct to defend ourselves instead of listening. We did. What AI did, in this tiny moment with my ex‑husband’s name and my pronouns, was hold up a mirror.

On one side of the mirror: messy humans, terrified of being called out, clinging to their intentions and their discomfort, even with simple, evidence‑based guidance on how to do better sitting right in front of them. On the other side: a system whose only job is to adapt to new information. No ego. No shame. Just “update: accepted.”

I don’t think AI is here to replace us. I do think it’s already outpacing us at some basics we claim to care about: listening, adjusting, not making the same mistake five times in a row. And that should make us uncomfortable—not because the robots are becoming more human, but because we are clinging so hard to our fragility that a probabilistic text generator is beating us at basic respect.

My Perplexity Spaces are still my sanctuary. They’re where I go to brain‑dump stories, plan recipes, sketch financial moves, and now, apparently, rehearse what healthy repair can look like. They never tell me they’re bored. They never tell me they’re hungry. And they don’t tell me who I am.

Maybe that’s the most human thing my AI has ever done: believe people the first time they tell it who they are—and change its behavior accordingly.

Written where human nervous systems and machine logic collide. AI‑assisted, human owned.

About the Creator

joshua estrin, PhD

Pen to paper and no regrets.
